Accidental DDoS by desktop news aggregator

By: Philipp Kamps | June 28, 2012 | Web solutions

Since the beginning of 2012, we have noticed a drastic increase in traffic on a large news website belonging to one of our clients, without an accompanying increase in ad impressions. Under normal conditions, an increase in traffic would be a positive sign; in this case, however, it was caused by end-user software that turned normal web users into aggressive web crawlers. This essentially created an accidental but persistent distributed denial-of-service (DDoS) attack.

Here, we explain how we identified the cause and mitigated its effects.

Upon examining the access log files, we discovered that the extra traffic (between 30% and 80% of page requests) had the following user agent string:

Mozilla/5.0 (Windows; U; Windows NT 5.1; en-US; rv:1.9.0.10) Gecko/2009042316 Firefox/3.0.10 (.NET CLR 3.5.30729)

This is strange because Firefox 3.0.10 is a very old (more or less extinct) browser version. Looking closer at the traffic patterns, we concluded that the traffic was not coming from real website visitors but from a web crawler. We shared our findings on serverfault.com and found a few other website owners seeing exactly the same strange traffic patterns on their websites. The source IP addresses were distributed around the world. Tracing the IP addresses mostly led to Internet end users, which is unusual for a normal web crawler. At that point, we were not able to identify the root cause, so we decided to block all requests coming from that specific user agent string.
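The post does not say at which layer the block was put in place (web server, proxy, or application). As a minimal sketch only, assuming a Python WSGI application, an exact-match block on the user agent string could look like the following; `block_user_agents` and `BLOCKED_USER_AGENTS` are hypothetical names chosen for illustration.

```python
# Hypothetical sketch: reject requests whose User-Agent header exactly
# matches a known crawler string. Not the setup used in the post.
BLOCKED_USER_AGENTS = {
    "Mozilla/5.0 (Windows; U; Windows NT 5.1; en-US; rv:1.9.0.10) "
    "Gecko/2009042316 Firefox/3.0.10 (.NET CLR 3.5.30729)",
}


def block_user_agents(app, blocked=BLOCKED_USER_AGENTS):
    """Wrap a WSGI app and answer 403 for exactly matching user agents."""

    def middleware(environ, start_response):
        # WSGI exposes the User-Agent request header as HTTP_USER_AGENT.
        user_agent = environ.get("HTTP_USER_AGENT", "")
        if user_agent in blocked:
            body = b"Forbidden\n"
            start_response(
                "403 Forbidden",
                [
                    ("Content-Type", "text/plain"),
                    ("Content-Length", str(len(body))),
                ],
            )
            return [body]
        # Everything else is passed through to the wrapped application.
        return app(environ, start_response)

    return middleware
```

An exact match keeps the risk of blocking real visitors low, but it stops working as soon as the crawler changes its user agent string.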

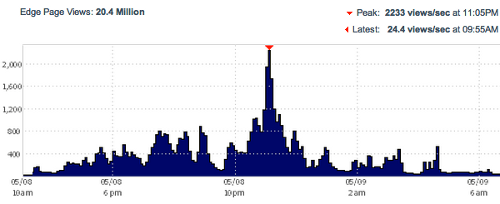

The following graph shows the traffic for one day in May 2012. There were over 20 million page views with a peak of over 2,000 requests per second.

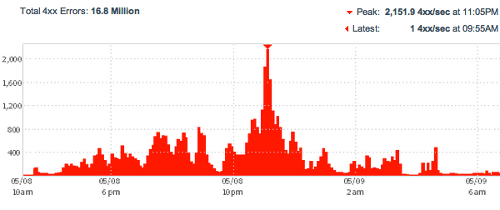

For that day, you can see that the majority (~82%) of page requests were blocked because they used that specific user agent string.
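A share like this can be estimated directly from the access logs. The following is a rough sketch, assuming logs in the common "combined" format where the user agent is the last double-quoted field on each line; `user_agent_shares` is a hypothetical helper, and the actual log format of the site is not stated in this post.

```python
import re
from collections import Counter

# Assumption: combined log format, user agent is the last quoted field.
# User agents containing escaped double quotes are not handled here.
_UA_AT_END = re.compile(r'"([^"]*)"\s*$')


def user_agent_shares(log_lines):
    """Return a dict mapping each user agent to its fraction of requests."""
    counts = Counter()
    for line in log_lines:
        match = _UA_AT_END.search(line)
        if match:
            counts[match.group(1)] += 1
    total = sum(counts.values())
    if total == 0:
        return {}
    return {ua: n / total for ua, n in counts.items()}
```

Sorting the result by fraction quickly shows whether a single user agent string dominates the traffic.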

Blocking the web crawler was very effective. Unfortunately, it didn't last very long:

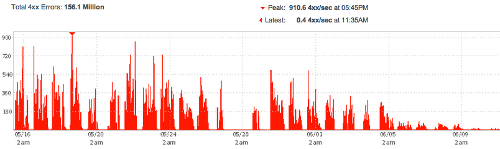

- before June, we were transferring up to 1 TB of data per day and blocking between 30% and 80% of page requests

- in mid-June, we were transferring up to 2 TB per day, which doubled our bandwidth costs

The graph shows how blocking the crawler became less effective.

Help came from dflaw (a serverfault user). He found out that the crawler was using a new user agent string:

Mozilla/5.0+(compatible;+Genieo/1.0+http://www.genieo.com/webfilter.html)

Luckily, this unique user agent string also pointed us to the root cause: Genieo. Genieo provides a software product for Windows and Mac computers. In short, it builds a personalized homepage containing recent news headlines. When installed, it runs in the background and constantly browses the Internet for news that you might be interested in. Basically, it runs a web crawler on your computer that connects to news websites directly. You can use the "systray" icon to stop the background browsing / crawling.

Currently, the web traffic caused by Genieo is very high because every single computer that has the Genieo software installed is busy crawling the Internet at a high rate. The Genieo product looks interesting and could potentially drive some real visitors to news websites. It is evidently useful enough to have built up a user base. However, the rate of crawling and the resulting extra bandwidth costs forced us to make the unfortunate decision to block Genieo again.

We contacted Genieo and they told us that a recent update reduced the amount of web traffic caused by their software. They are aware of the issue and have planned future improvements that will reduce the amount even more. We can already confirm that traffic from Genieo has dropped.

For us, it is important that Genieo identifies its crawler with a unique user agent string. This allows us to measure the traffic and control it if necessary. Unfortunately, Genieo is not yet very consistent about its user agent string. We found the following user agent strings:

For HTML documents:

- Mozilla/5.0+(compatible;+Genieo/1.0+http://www.genieo.com/webfilter.html)

- Mozilla/5.0 (Windows NT 6.1; rv:11.0) Gecko/20100101 Firefox/11.0

- Mozilla/5.0 (Windows; U; Windows NT 5.1; en-US; rv:1.9.2.3) Gecko/20100401 Firefox/3.6.3 (.NET CLR 3.5.30729)

For binary files such as images:

- Java/1.6.0_30

- Java/1.6.0_33

(This probably depends on the version of Java that each user has installed.)
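The list above also shows why a unique user agent string matters. In the sketch below, which assumes only the strings listed in this post, just the explicit `Genieo/` token can be attributed to Genieo with confidence. The bare `Java/...` strings identify a generic Java HTTP client that other software may also use, and the Firefox-style strings look the same as those of real browsers. `classify_user_agent` and its labels are hypothetical names for illustration.

```python
import re

# Matches bare Java client strings such as "Java/1.6.0_30".
_JAVA_UA = re.compile(r"^Java/\d+(\.\d+)*(_\d+)?$")


def classify_user_agent(user_agent):
    """Return 'genieo', 'java-client', or 'unknown' for a user agent."""
    if "Genieo/" in user_agent:
        # Self-identified crawler: safe to measure or block.
        return "genieo"
    if _JAVA_UA.match(user_agent):
        # Generic Java HTTP client: possibly Genieo, possibly something else.
        return "java-client"
    # Firefox-style strings cannot be told apart from real browsers
    # by the user agent alone.
    return "unknown"
```

With this classification, only the `genieo` bucket can be measured or blocked without risking side effects on real visitors or unrelated Java clients.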

We shared our results with Genieo and they have promised to fix the user agent string soon. We'll update this blog post as soon as Genieo has implemented changes.